RuntimeError: Trying to backward through the graph a second time (or directly access saved variable

2022-06-25 17:39:00 【Reject ellipsis】

I ran into this error while using PyTorch, and I am writing it down so that I do not fall into the same pit again. Thanks to this pitfall, I now have a clearer understanding of how to use pre-trained models.

RuntimeError: Trying to backward through the graph a second time (or directly access saved variables after they have already been freed). Saved intermediate values of the graph are freed when you call .backward() or autograd.grad(). Specify retain_graph=True if you need to backward through the graph a second time or if you need to access saved variables after calling backward.

Simply put, a tensor that carries gradient information has already been backpropagated through once, and autograd freed the intermediate values saved in its computational graph. Our code then tries to use those freed values during a second backward pass.
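The mechanism can be reproduced without any model. The sketch below is a minimal illustration rather than the author's code: the tensor `x` and the function name `double_backward` are made up for this example. It backpropagates twice through the same small graph, once normally and once with `retain_graph=True`.

```python
import torch


def double_backward(retain_graph: bool):
    """Call backward twice on the same graph; return (error_raised, x.grad)."""
    x = torch.tensor(2.0, requires_grad=True)
    y = x * x  # multiplication saves x so that the gradient can be computed later

    y.backward(retain_graph=retain_graph)  # first pass: x.grad becomes 2 * x
    try:
        y.backward()  # second pass through the same graph
    except RuntimeError:
        return True, x.grad.item()
    return False, x.grad.item()


print(double_backward(retain_graph=False))
print(double_backward(retain_graph=True))
```

Note that with `retain_graph=True` the two gradients accumulate in `x.grad`, which is rarely what a training loop wants, so retaining the graph is usually not the right fix for a loop that rebuilds its loss on every iteration.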

Cause

The cause may differ from case to case.

In my case, the Embedding call was written outside the training loop.

Here is my failing code, the first code example.

As you can see, the token indices are passed through the Embedding once up front, and only then does training start. This produces the error above.

import torch
from torch import nn

net = nn.Linear(32, 2)
Loss = nn.CrossEntropyLoss()

sample = torch.tensor([[1, 2, 3, 3],
                       [3, 2, 1, 5],
                       [4, 5, 9, 3]])
target = torch.ones((12,)).to(torch.long)  # The class of each token
print(sample)

embedding = nn.Embedding(10, 32)
embed_sample = embedding(sample)  # torch.Size([3, 4, 32]); computed once, outside the loop

net.train()
optimizer = torch.optim.Adam(net.parameters(), lr=1e-3)

for i in range(100):
    pred = net(embed_sample)    # torch.Size([3, 4, 2])
    pred = pred.reshape(-1, 2)  # torch.Size([12, 2])
    loss = Loss(pred, target)   # Compute the loss
    optimizer.zero_grad()       # Zero the gradients
    loss.backward()             # Backpropagate; fails on the second iteration
    optimizer.step()            # Update the parameters
    print(i+1, loss)

# Output

---------------------------------------------------------------------------

1 tensor(0.7125, grad_fn=<NllLossBackward>)

RuntimeError Traceback (most recent call last)

D:\Temp\ipykernel_8312\2990520637.py in <cell line: 3>()

7 #sum_loss # One epoch All losses and

8 optimizer.zero_grad() # Zero gradient

----> 9 loss.backward() # Back propagation

10 optimizer.step() # Update gradient

11 print(i+1,loss)

E:\anaconda\lib\site-packages\torch\_tensor.py in backward(self, gradient, retain_graph, create_graph, inputs)

253 create_graph=create_graph,

254 inputs=inputs)

--> 255 torch.autograd.backward(self, gradient, retain_graph, create_graph, inputs=inputs)

256

257 def register_hook(self, hook):

E:\anaconda\lib\site-packages\torch\autograd\__init__.py in backward(tensors, grad_tensors, retain_graph, create_graph, grad_variables, inputs)

145 retain_graph = create_graph

146

--> 147 Variable._execution_engine.run_backward(

148 tensors, grad_tensors_, retain_graph, create_graph, inputs,

149 allow_unreachable=True, accumulate_grad=True) # allow_unreachable flag

RuntimeError: Trying to backward through the graph a second time (or directly access saved variables after they have already been freed). Saved intermediate values of the graph are freed when you call .backward() or autograd.grad(). Specify retain_graph=True if you need to backward through the graph a second time or if you need to access saved variables after calling backward.

This happens because the embedded input is computed only once, so every iteration reuses the same embedding node in the computational graph. The first backward pass frees the values saved for that node, so the backward pass in the second iteration fails.
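A quick way to see this, sketched here with a shortened loop (the helper name `first_failing_iteration` is made up for illustration): the precomputed `embed_sample` carries a `grad_fn`, meaning it is still attached to the embedding's graph, and the helper reports the index of the iteration whose backward pass raises.

```python
import torch
from torch import nn


def first_failing_iteration(steps: int = 3):
    """Reuse a precomputed embedding output; return the index of the failing iteration."""
    net = nn.Linear(32, 2)
    loss_fn = nn.CrossEntropyLoss()
    sample = torch.tensor([[1, 2, 3, 3], [3, 2, 1, 5], [4, 5, 9, 3]])
    target = torch.ones((12,), dtype=torch.long)

    embedding = nn.Embedding(10, 32)
    embed_sample = embedding(sample)  # the graph for the lookup is built once, here
    assert embed_sample.grad_fn is not None  # still attached to the embedding graph

    for i in range(steps):
        loss = loss_fn(net(embed_sample).reshape(-1, 2), target)
        try:
            loss.backward()  # walks back into the shared embedding node
        except RuntimeError:
            return i
    return None


print(first_failing_iteration())
```

The linear layer gets a fresh graph on every iteration; only the embedding lookup is shared, which is why the first iteration succeeds.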

Solution

The fix is simple: move the Embedding call inside the loop, as in the following code.

import torch
from torch import nn

net = nn.Linear(32, 2)
Loss = nn.CrossEntropyLoss()

sample = torch.tensor([[1, 2, 3, 3],
                       [3, 2, 1, 5],
                       [4, 5, 9, 3]])
target = torch.ones((12,)).to(torch.long)  # The class of each token
print(sample)

embedding = nn.Embedding(10, 32)

net.train()
optimizer = torch.optim.Adam(net.parameters(), lr=1e-3)

for i in range(100):
    # The lookup is moved here, inside the loop
    embed_sample = embedding(sample)  # torch.Size([3, 4, 32])
    pred = net(embed_sample)          # torch.Size([3, 4, 2])
    pred = pred.reshape(-1, 2)        # torch.Size([12, 2])
    loss = Loss(pred, target)         # Compute the loss
    optimizer.zero_grad()             # Zero the gradients
    loss.backward()                   # Backpropagate
    optimizer.step()                  # Update the parameters
    print(i+1, loss)

Is the Embedding not being trained?

The problem with this version is that the parameters of Embedding() do not take part in training (you can verify this yourself). The reason is that

optimizer = torch.optim.Adam(net.parameters(), lr=1e-3) was never given the embedding's parameters.

The fix is to add the embedding's parameters to the optimizer: optimizer = torch.optim.Adam(list(embedding.parameters()) + list(net.parameters()), lr=1e-3)

(Full code, mostly repeated from above)

import torch
from torch import nn

net = nn.Linear(32, 2)
Loss = nn.CrossEntropyLoss()

sample = torch.tensor([[1, 2, 3, 3],
                       [3, 2, 1, 5],
                       [4, 5, 9, 3]])
target = torch.ones((12,)).to(torch.long)  # The class of each token
print(sample)

embedding = nn.Embedding(10, 32)
print(list(embedding.parameters()))  # Weights before training

net.train()
# Pass the embedding's parameters to the optimizer as well
optimizer = torch.optim.Adam(list(embedding.parameters()) + list(net.parameters()), lr=1e-3)

for i in range(100):
    # The lookup stays inside the loop
    embed_sample = embedding(sample)  # torch.Size([3, 4, 32])
    pred = net(embed_sample)          # torch.Size([3, 4, 2])
    pred = pred.reshape(-1, 2)        # torch.Size([12, 2])
    loss = Loss(pred, target)         # Compute the loss
    optimizer.zero_grad()             # Zero the gradients
    loss.backward()                   # Backpropagate
    optimizer.step()                  # Update the parameters
    print(i+1, loss)

print(list(embedding.parameters()))  # Compare with the weights printed before training
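Rather than comparing two printed tensors by eye, the claim can be checked programmatically. This is a small sketch with a shortened loop; the helper `embedding_changed` and its `include_embedding` flag are names invented for the example.

```python
import torch
from torch import nn


def embedding_changed(include_embedding: bool, steps: int = 5) -> bool:
    """Train briefly and report whether the embedding weights were updated."""
    net = nn.Linear(32, 2)
    embedding = nn.Embedding(10, 32)
    loss_fn = nn.CrossEntropyLoss()
    sample = torch.tensor([[1, 2, 3, 3], [3, 2, 1, 5], [4, 5, 9, 3]])
    target = torch.ones((12,), dtype=torch.long)

    params = list(net.parameters())
    if include_embedding:
        params += list(embedding.parameters())
    optimizer = torch.optim.Adam(params, lr=1e-3)

    before = embedding.weight.detach().clone()  # snapshot of the initial weights
    for _ in range(steps):
        pred = net(embedding(sample)).reshape(-1, 2)
        loss = loss_fn(pred, target)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    return not torch.equal(before, embedding.weight.detach())


print(embedding_changed(include_embedding=False))
print(embedding_changed(include_embedding=True))
```

The optimizer only updates the parameters it was constructed with, so the embedding weights move only in the second call.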

Putting the Embedding directly into net

After all of the above, the obvious next step is to add the Embedding layer directly to net.

import torch
from torch import nn

net = nn.Sequential(nn.Embedding(10, 32),
                    nn.Linear(32, 2))
Loss = nn.CrossEntropyLoss()

sample = torch.tensor([[1, 2, 3, 3],
                       [3, 2, 1, 5],
                       [4, 5, 9, 3]])
target = torch.ones((12,)).to(torch.long)  # The class of each token
print(sample)

print(list(net[0].parameters()))  # The parameters of the Embedding layer

net.train()
optimizer = torch.optim.Adam(net.parameters(), lr=1e-3)

for i in range(100):
    # The embedding lookup now happens inside net
    pred = net(sample)          # torch.Size([3, 4, 2])
    pred = pred.reshape(-1, 2)  # torch.Size([12, 2])
    loss = Loss(pred, target)   # Compute the loss
    optimizer.zero_grad()       # Zero the gradients
    loss.backward()             # Backpropagate
    optimizer.step()            # Update the parameters
    print(i+1, loss)

print(list(net[0].parameters()))  # Compare with the weights printed before training
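For models that outgrow nn.Sequential, the same idea is commonly written as an nn.Module subclass. A minimal sketch, where the class name `TokenClassifier` and its constructor arguments are made up for illustration:

```python
import torch
from torch import nn


class TokenClassifier(nn.Module):
    """Embedding followed by a linear layer, both registered as submodules."""

    def __init__(self, vocab_size: int = 10, embed_dim: int = 32, num_classes: int = 2):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embed_dim)
        self.linear = nn.Linear(embed_dim, num_classes)

    def forward(self, tokens: torch.Tensor) -> torch.Tensor:
        # The lookup runs on every forward call, so each iteration gets a fresh graph.
        return self.linear(self.embedding(tokens))


model = TokenClassifier()
sample = torch.tensor([[1, 2, 3, 3], [3, 2, 1, 5], [4, 5, 9, 3]])
pred = model(sample)
print(pred.shape)
print([name for name, _ in model.named_parameters()])
```

Because both layers are attributes of the module, `model.parameters()` already includes the embedding weights, so an optimizer built from `model.parameters()` trains them too.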

Takeaway: not using the Embedding's gradient information

Suppose I now load a pre-trained model and do not want its parameters to be updated.

Then it is enough to leave its parameters out of optimizer = torch.optim.Adam(net.parameters(), lr=1e-3).

But in that case the pre-trained model's parameters still take part in backpropagation (otherwise the error at the beginning would never have been raised), which adds unnecessary computation. What we want is for the pre-trained model to stay out of backpropagation entirely, that is, to carry no gradient information.

Method 1:

1. Use .detach() so that the word embedding vectors fed into our net carry no gradient information from the pre-trained model. (In my case: net(embed_sample.detach()))

(More repeated code)

import torch
from torch import nn

net = nn.Linear(32, 2)
Loss = nn.CrossEntropyLoss()

sample = torch.tensor([[1, 2, 3, 3],
                       [3, 2, 1, 5],
                       [4, 5, 9, 3]])
target = torch.ones((12,)).to(torch.long)  # The class of each token
print(sample)

embedding = nn.Embedding(10, 32)
embed_sample = embedding(sample)  # torch.Size([3, 4, 32])

net.train()
optimizer = torch.optim.Adam(net.parameters(), lr=1e-3)

for i in range(100):
    # Detach here, so backpropagation stops before the embedding
    pred = net(embed_sample.detach())  # torch.Size([3, 4, 2])
    pred = pred.reshape(-1, 2)         # torch.Size([12, 2])
    loss = Loss(pred, target)          # Compute the loss
    optimizer.zero_grad()              # Zero the gradients
    loss.backward()                    # Backpropagate
    optimizer.step()                   # Update the parameters
    print(i+1, loss)
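To see what .detach() saves, one can inspect `embedding.weight.grad` after a single backward pass. A sketch under the same toy setup (the helper name `embedding_grad_after_backward` is invented here):

```python
import torch
from torch import nn


def embedding_grad_after_backward(detach: bool):
    """Run one forward/backward pass and return embedding.weight.grad."""
    net = nn.Linear(32, 2)
    embedding = nn.Embedding(10, 32)
    loss_fn = nn.CrossEntropyLoss()
    sample = torch.tensor([[1, 2, 3, 3], [3, 2, 1, 5], [4, 5, 9, 3]])
    target = torch.ones((12,), dtype=torch.long)

    embed_sample = embedding(sample)
    if detach:
        embed_sample = embed_sample.detach()  # cut the link to the embedding graph
    loss = loss_fn(net(embed_sample).reshape(-1, 2), target)
    loss.backward()
    return embedding.weight.grad


print(embedding_grad_after_backward(detach=False) is None)
print(embedding_grad_after_backward(detach=True) is None)
```

Without the detach, a gradient is computed for the embedding weights even though no optimizer will ever use it; with the detach, the backward pass never reaches the embedding and the gradient stays None.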

Method 2:

2. Use with torch.no_grad() so that no gradient information is recorded when the pre-trained model generates the word embedding vectors.

import torch
from torch import nn

net = nn.Linear(32, 2)
Loss = nn.CrossEntropyLoss()

sample = torch.tensor([[1, 2, 3, 3],
                       [3, 2, 1, 5],
                       [4, 5, 9, 3]])
target = torch.ones((12,)).to(torch.long)  # The class of each token
print(sample)

embedding = nn.Embedding(10, 32)
with torch.no_grad():
    embed_sample = embedding(sample)  # torch.Size([3, 4, 32]); no graph is recorded

net.train()
optimizer = torch.optim.Adam(net.parameters(), lr=1e-3)

for i in range(100):
    # embed_sample has no graph attached, so it can be reused in every iteration
    pred = net(embed_sample)    # torch.Size([3, 4, 2])
    pred = pred.reshape(-1, 2)  # torch.Size([12, 2])
    loss = Loss(pred, target)   # Compute the loss
    optimizer.zero_grad()       # Zero the gradients
    loss.backward()             # Backpropagate
    optimizer.step()            # Update the parameters
    print(i+1, loss)
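The effect of torch.no_grad() can be confirmed on the tensor itself. The sketch below also shows nn.Embedding.from_pretrained with freeze=True, an alternative that is not used in this post; the random `pretrained_weights` tensor is only a stand-in for real pre-trained vectors.

```python
import torch
from torch import nn

sample = torch.tensor([[1, 2, 3, 3], [3, 2, 1, 5], [4, 5, 9, 3]])

# Variant from the text: run the lookup under no_grad.
embedding = nn.Embedding(10, 32)
with torch.no_grad():
    embed_sample = embedding(sample)
print(embed_sample.requires_grad, embed_sample.grad_fn)

# Alternative (an assumption, not from the post): freeze the weights themselves.
pretrained_weights = torch.randn(10, 32)  # placeholder for real pre-trained vectors
frozen = nn.Embedding.from_pretrained(pretrained_weights, freeze=True)
frozen_sample = frozen(sample)
print(frozen.weight.requires_grad, frozen_sample.requires_grad)
```

In both variants the output tensor does not require gradients, so a backward pass through a downstream layer stops there and the tensor can safely be computed once and reused.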

边栏推荐

- Can I open an account? Is it safe to open an account

- 相同wifi下,笔记本连接台式机上的虚拟机

- 观察者模式之通用消息发布与订阅

- 【编译原理】词法分析

- College Students' hot summer exchange, Rog star product phantom 16 flipped version / phantom 13 / phantom x appointment

- 杰理之定时器捕获(timer_cap.c)使用注意事项【篇】

- CGI connects to database through ODBC

- Langage d'assemblage (5) Registre (accès à la mémoire)

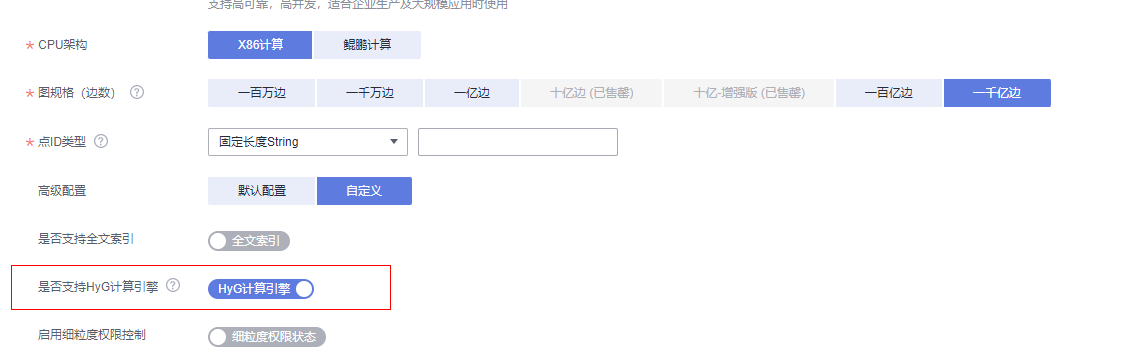

- Uncover ges super large scale graph computing engine hyg: Graph Segmentation

- LSF如何看job预留slot是否合理?

猜你喜欢

![[UVM practice== > episode_2] ~ VIP, VIP development, VIP release](/img/de/4befb321d5cf5b0aa630e9ad0fda2a.png)

[UVM practice== > episode_2] ~ VIP, VIP development, VIP release

杰理之如何给外界输出一个时钟源使用【篇】

揭秘GES超大规模图计算引擎HyG:图切分

上线移动ERP系统有哪些步骤?环环紧扣很重要

How does social e-commerce operate and promote?

智能对话01-redis的安装

Redis distributed lock collation

Learn Tai Chi Maker - mqtt (III) connect to mqtt server

相同wifi下,笔记本连接台式机上的虚拟机

数学建模——线性规划

随机推荐

CONDA modifying a mirror source

How does social e-commerce operate and promote?

CVPR small target detection: context and attention mechanisms improve small target detection (attached with paper Download)

CVPR小目标检测:上下文和注意力机制提升小目标检测(附论文下载)

How to solve the problem of network disconnection after enabling hotspot sharing in win10?

Automatic submission for the next education day

LSF如何看job预留slot是否合理?

揭秘GES超大规模图计算引擎HyG:图切分

WPF开发随笔收录-心电图曲线绘制

Learning Tai Chi makers - mqtt (I) what is mqtt

HMS Core机器学习服务实现同声传译,支持中英文互译和多种音色语音播报

Jerry's system clock setting is reset or invalid [chapter]

Bert's summary of me

杰理之SPI 从机使用注意事项【篇】

智能对话01-redis的安装

Utilisation de diskgenius pour augmenter la capacité du disque système C

杰理之定时器使用注意事项【篇】

通过深度可分离卷积神经网络对七种表情进行区分

RuntimeError: Trying to backward through the graph a second time (or directly access saved variable

WARNING: Unsupported upgrade request.