当前位置:网站首页>Architecture evolution of high-performance servers -- Suggestions

Architecture evolution of high-performance servers -- Suggestions

2022-06-25 15:24:00 【Running pig ZC】

Catalog

The way of architecture evolution

Write in the front

For back-end development , High concurrency is an old topic , Recently, the author encountered a very thorny problem , It's about high concurrency , At present, it is in support of 30W Terminals , But it will continue to grow in the later period , When I hear to support 500W When , I feel like I will have a bald head soon , But don't panic , There is always a way , I checked various materials and consulted several big men , Solved the problem step by step , Next, I will share how to build a high-performance , Highly concurrent servers .

The way of architecture evolution

1: The simplest --- Direct access tomcat Single application , Basically no problem

2: in consideration of tomcat Handle task Upper limit ( Plus tuning ) I still can't bear it , use nginx Load balancing , There should be no problem compared with this

3: Find this time DB The pressure will be greater , So I made a separation of reading and writing , No problem

4: Even if it is read-write separation , But now a large number of streams are generally queries ,DB There is still a bottleneck , You can consider DB colony ( at present mysql Has supported ), Skip here , Caching is considered in this article ( Now the projects are basically distributed , Then consider distributed caching ), So the architecture diagram becomes a dead end

5:4 It just basically solves the problem of reading , But what if it's written ? I'm not going straight to DB Write , Every time I write ? It doesn't exist well , Consider using caching , When the data is accumulated to a certain amount , Write the data once again DB, Or is it ES( After my test ,ES Write performance is really low , But reading is really powerful , Basically, it can be counted G Data in 100ms Back inside .), Use here JVM The cache of , You may need a global clock . Then the architecture diagram looks like this

6: Think it's over here ? As a person who is about to have a bald head , We must not stop here , So consider whether you can not let the request return results without passing through the background server ? So there was a great roar OpenResty(nginx Enhanced version of , The original author is Russian , The Chinese will strengthen and upgrade it , Add a series of plug-ins , And open source , at present JD Use this to cache product details ), Currently used in the project OpenResty Of Cache( operation nginx The cache of , Expiration time can be set , Cache size , Strategy and so on ),mysql( Can be operated directly mysql),redis( Can operate redis) modular , Cooperate with Lua Script together , The result is really fragrant , Then the architecture diagram evolves into the following ghost , It looks a little complicated anyway .

summary

There are many high concurrency problems in the project , Many solutions can be considered , For example, a single body cannot , I just distributed + colony , If not, consider caching , Caching can be divided into two types , Server cache and web Layer cache , Then you can optimize the code , Unnecessary synchronization can be changed to asynchronism , Use thread pool , The parameters of the thread pool can be tuned , Reference resources https://blog.csdn.net/weixin_45191798/article/details/100153524 , When writing for a large amount of data traffic , We can take into account the so-called tens of millions of KAFKA To cut the flow peak , Finally, it's really no good , With a bald head , Growing cabbages and raising pigs at home is also an option ( Pork is still more expensive , The way to get rich ), like JAVA And architecture students can discuss , AC duck .

边栏推荐

- Generation method and usage of coredump

- Qlogsystem log system configuration use

- 5 connection modes of QT signal slot

- Basic syntax and common commands of R language

- Disable scrolling in the iPhone web app- Disable scrolling in an iPhone web application?

- 55 specific ways to improve program design (2)

- Solve valueerror: invalid literal for int() with base 10

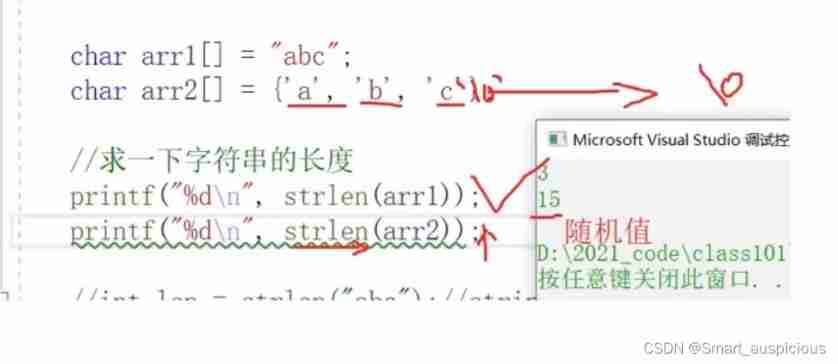

- The difference between sizeof and strlen

- iconv_ Open returns error code 22

- Stack and queue

猜你喜欢

Learning notes on February 5, 2022 (C language)

JMeter reading and writing excel requires jxl jar

![[paper notes] poly yolo: higher speed, more precise detection and instance segmentation for yolov3](/img/28/6d58759a4a4b18923a5ed5ed573956.jpg)

[paper notes] poly yolo: higher speed, more precise detection and instance segmentation for yolov3

Iterator failure condition

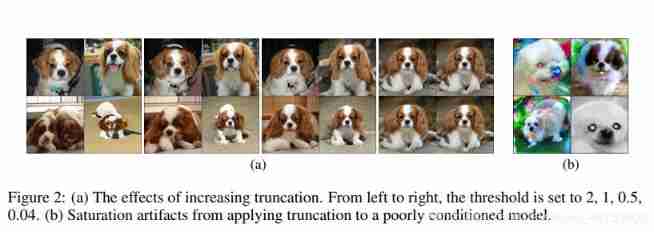

Paddlepaddle paper reproduction course biggan learning experience

google_ Breakpad crash detection

Review of arrays and pointers triggered by a topic

How to download and install Weka package

Learning notes on February 8, 2022 (C language)

Common dynamic memory errors

随机推荐

Errno perrno and strerrno

QT database connection

Efficient pytorch: how to eliminate training bottlenecks

How to download and install Weka package

5 connection modes of QT signal slot

Graphic control and layout basis of R visualization

QT animation loading and closing window

Review of arrays and pointers triggered by a topic

[C language] implementation of magic square array (the most complete)

Yolov5 Lite: fewer parameters, higher accuracy and faster detection speed

2. operator and expression multiple choice questions

[paper notes] street view change detection with deconvolutional networks

Luogu p5707 [deep foundation 2. example 12] late for school

Leetcode123 timing of buying and selling stocks III

(1) Introduction

Cross compilation correlation of curl Library

QT source code online view

[paper notes] poly yolo: higher speed, more precise detection and instance segmentation for yolov3

2.18 codeforces supplement

One code per day - day one