
tf.contrib.layers.batch_norm

2022-06-24 09:40:00 【Wanderer001】

Reference: tf.contrib.layers.batch_norm - Cloud+ Community - Tencent Cloud

Adds a Batch Normalization layer from http://arxiv.org/abs/1502.03167

tf.contrib.layers.batch_norm(
    inputs,
    decay=0.999,
    center=True,
    scale=False,
    epsilon=0.001,
    activation_fn=None,
    param_initializers=None,
    param_regularizers=None,
    updates_collections=tf.GraphKeys.UPDATE_OPS,
    is_training=True,
    reuse=None,
    variables_collections=None,
    outputs_collections=None,
    trainable=True,
    batch_weights=None,
    fused=None,
    data_format=DATA_FORMAT_NHWC,
    zero_debias_moving_mean=False,
    scope=None,
    renorm=False,
    renorm_clipping=None,
    renorm_decay=0.99,
    adjustment=None
)

"Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift"

Sergey Ioffe, Christian Szegedy

Can be used as a normalizer function for conv2d and fully_connected. The normalization is over all but the last dimension if data_format is NHWC and all but the second dimension if data_format is NCHW. In case of a 2D tensor this corresponds to the batch dimension, while in case of a 4D tensor this corresponds to the batch and space dimensions.
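The choice of normalization axes described above can be sketched in plain Python. This is only an illustration, not the library's implementation: `reduction_axes` and `batch_norm_2d` are hypothetical helper names, the inputs are nested lists rather than tensors, and gamma and beta are left out.

```python
import math


def reduction_axes(rank, data_format="NHWC"):
    """Return the axes that are averaged over: every axis except the channel axis."""
    if data_format not in ("NHWC", "NCHW"):
        raise ValueError("data_format must be NHWC or NCHW")
    if rank < 2:
        raise ValueError("inputs must have at least 2 dimensions")
    # Channels are last for NHWC and second (index 1) for NCHW.
    channel_axis = rank - 1 if data_format == "NHWC" else 1
    return [axis for axis in range(rank) if axis != channel_axis]


def batch_norm_2d(rows, epsilon=0.001):
    """Normalize a [batch_size, features] nested list per feature column."""
    batch_size = len(rows)
    num_features = len(rows[0])
    # Per-feature mean and (biased) variance over the batch dimension.
    means = [sum(row[j] for row in rows) / batch_size for j in range(num_features)]
    variances = [
        sum((row[j] - means[j]) ** 2 for row in rows) / batch_size
        for j in range(num_features)
    ]
    return [
        [(row[j] - means[j]) / math.sqrt(variances[j] + epsilon)
         for j in range(num_features)]
        for row in rows
    ]


axes_nhwc = reduction_axes(4, "NHWC")  # batch, height, width
axes_nchw = reduction_axes(4, "NCHW")  # batch, height, width (channels at index 1)
normalized = batch_norm_2d([[1.0, 10.0], [3.0, 30.0]])
```

For a 2D input both data formats reduce over axis 0 only, which is the batch dimension mentioned above.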

Note: when training, the moving_mean and moving_variance need to be updated. By default the update ops are placed in tf.GraphKeys.UPDATE_OPS, so they need to be added as a dependency to the train_op. For example:

update_ops = tf.compat.v1.get_collection(tf.GraphKeys.UPDATE_OPS)
with tf.control_dependencies(update_ops):
    train_op = optimizer.minimize(loss)

One can set updates_collections=None to force the updates in place, but that can have a speed penalty, especially in distributed settings.
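What each update op computes is, in essence, one exponential-moving-average step. The sketch below shows that step in plain Python and how `decay` controls how quickly the moving statistic catches up with the batch statistics. The helper names are hypothetical, and the starting value of 0.0 is an assumption that mirrors a zero-initialized moving_mean.

```python
def assign_moving_average(moving, value, decay):
    """One EMA step, equivalent to decay * moving + (1 - decay) * value."""
    return moving - (1.0 - decay) * (moving - value)


def track(batch_means, decay, initial=0.0):
    """Apply one EMA step per training batch and return the final moving mean."""
    moving = initial
    for batch_mean in batch_means:
        moving = assign_moving_average(moving, batch_mean, decay)
    return moving


# 100 training steps in which every batch has mean 1.0.
slow = track([1.0] * 100, decay=0.999)  # still far from 1.0
fast = track([1.0] * 100, decay=0.9)    # very close to 1.0
```

With few training steps and a decay near 1.0, the moving statistics used at inference time can lag well behind the batch statistics seen during training. That is one reason a lower decay may help when validation performance is poor.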

Args:

inputs: A tensor with 2 or more dimensions, where the first dimension has batch_size. The normalization is over all but the last dimension if data_format is NHWC and all but the second dimension if data_format is NCHW.

decay: Decay for the moving average. Reasonable values for decay are close to 1.0, typically in the multiple-nines range: 0.999, 0.99, 0.9, etc. Lower the decay value (recommend trying decay=0.9) if the model experiences reasonably good training performance but poor validation and/or test performance. Try zero_debias_moving_mean=True for improved stability.

center: If True, add offset of beta to the normalized tensor. If False, beta is ignored.

scale: If True, multiply by gamma. If False, gamma is not used. When the next layer is linear (also e.g. nn.relu), this can be disabled since the scaling can be done by the next layer.

epsilon: Small float added to variance to avoid dividing by zero.

activation_fn: Activation function, default set to None to skip it and maintain a linear activation.

param_initializers: Optional initializers for beta, gamma, moving mean and moving variance.

param_regularizers: Optional regularizer for beta and gamma.

updates_collections: Collections to collect the update ops for computation. The update ops need to be executed with the train_op. If None, a control dependency would be added to make sure the updates are computed in place.

is_training: Whether or not the layer is in training mode. In training mode it would accumulate the statistics of the moments into moving_mean and moving_variance using an exponential moving average with the given decay. When it is not in training mode then it would use the values of the moving_mean and the moving_variance.

reuse: Whether or not the layer and its variables should be reused. To be able to reuse the layer, scope must be given.

variables_collections: Optional collections for the variables.

outputs_collections: Collections to add the outputs.

trainable: If True, also add variables to the graph collection GraphKeys.TRAINABLE_VARIABLES (see tf.Variable).

batch_weights: An optional tensor of shape [batch_size], containing a frequency weight for each batch item. If present, then the batch normalization uses weighted mean and variance. (This can be used to correct for bias in training example selection.)

fused: If None or True, use a faster, fused implementation if possible. If False, use the system recommended implementation.

data_format: A string. NHWC (default) and NCHW are supported.

zero_debias_moving_mean: Use zero_debias for moving_mean. It creates a new pair of variables 'moving_mean/biased' and 'moving_mean/local_step'.

scope: Optional scope for variable_scope.

renorm: Whether to use Batch Renormalization (https://arxiv.org/abs/1702.03275). This adds extra variables during training. The inference is the same for either value of this parameter.

renorm_clipping: A dictionary that may map keys 'rmax', 'rmin', 'dmax' to scalar Tensors used to clip the renorm correction. The correction (r, d) is used as corrected_value = normalized_value * r + d, with r clipped to [rmin, rmax], and d to [-dmax, dmax]. Missing rmax, rmin, dmax are set to inf, 0, inf, respectively.

renorm_decay: Momentum used to update the moving means and standard deviations with renorm. Unlike decay, this affects training and should be neither too small (which would add noise) nor too large (which would give stale estimates). Note that decay is still applied to get the means and variances for inference.

adjustment: A function taking the Tensor containing the (dynamic) shape of the input tensor and returning a pair (scale, bias) to apply to the normalized values (before gamma and beta), only during training. For example, adjustment = lambda shape: (tf.random.uniform(shape[-1:], 0.93, 1.07), tf.random.uniform(shape[-1:], -0.1, 0.1)) will scale the normalized value by up to 7% up or down, then shift the result by up to 0.1 (with independent scaling and bias for each feature but shared across all examples), and finally apply gamma and/or beta. If None, no adjustment is applied.
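The renorm_clipping behaviour can be illustrated with scalars. In this rough sketch the clipping bounds and the formula corrected_value = normalized_value * r + d come from the description above. The way r and d are derived from batch and moving statistics follows the Batch Renormalization paper and is an assumption about what the layer does internally. All function names are hypothetical.

```python
import math


def clip(value, low, high):
    """Clamp value to the closed interval [low, high]."""
    return max(low, min(high, value))


def renorm_correction(batch_mean, batch_std, moving_mean, moving_std,
                      renorm_clipping=None):
    """Compute the clipped (r, d) correction for one feature."""
    clipping = renorm_clipping or {}
    # Missing keys default to inf, 0, inf, as stated in the argument description.
    rmax = clipping.get("rmax", math.inf)
    rmin = clipping.get("rmin", 0.0)
    dmax = clipping.get("dmax", math.inf)
    # Assumed definitions from the Batch Renormalization paper.
    r = clip(batch_std / moving_std, rmin, rmax)
    d = clip((batch_mean - moving_mean) / moving_std, -dmax, dmax)
    return r, d


def corrected_value(normalized_value, r, d):
    """Apply the correction to a value already normalized with batch statistics."""
    return normalized_value * r + d


bounds = {"rmax": 2.0, "rmin": 0.5, "dmax": 1.0}
r, d = renorm_correction(2.0, 3.0, 0.0, 1.0, bounds)  # both values hit their bounds
out = corrected_value(0.5, r, d)
unclipped = renorm_correction(2.0, 3.0, 0.0, 1.0)     # no clipping applied
```

Tight bounds (rmax = rmin = 1, dmax = 0) make the correction a no-op, so the layer behaves like ordinary batch normalization during training.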

Returns:

A Tensor representing the output of the operation.

Raises:

ValueError: If data_format is neither NHWC nor NCHW.

ValueError: If the rank of inputs is undefined.

ValueError: If rank or channels dimension of inputs is undefined.

边栏推荐

猜你喜欢

简单的价格表样式代码

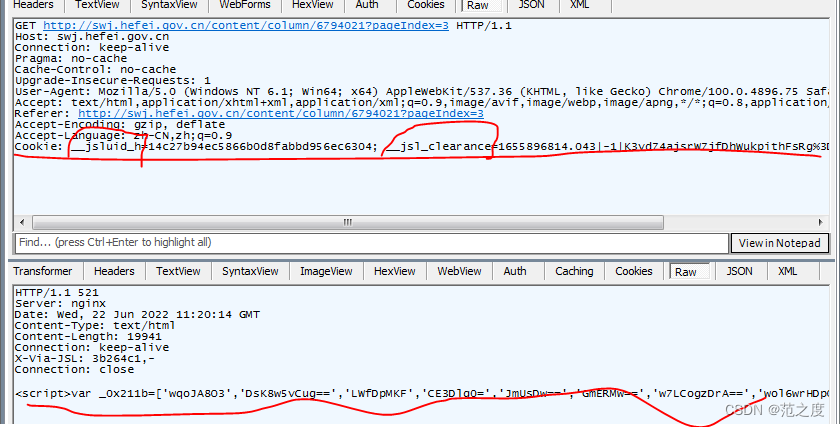

Cookie encryption 4 RPC method determines cookie encryption

Indexeddb local storage, homepage optimization

二叉树第一部分

js单例模式

Programming questions (continuously updated)

GeoGebra 实例 时钟

LeetCode: 240. Search 2D matrix II

居家办公如何管理数据中心网络基础设施?

Practical analysis: implementation principle of APP scanning code landing (app+ detailed logic on the web side) with source code

随机推荐

port 22: Connection refused

Arbre binaire partie 1

PostgreSQL DBA快速入门-通过源码编译安装

415 binary tree (144. preorder traversal of binary tree, 145. postorder traversal of binary tree, 94. inorder traversal of binary tree)

Desktop software development framework reward

被困英西中学的师生安全和食物有保障

SQL sever基本数据类型详解

416 binary tree (first, middle and last order traversal iteration method)

CICFlowMeter源码分析以及为满足需求而进行的修改

What are the characteristics of EDI local deployment and cloud hosting solutions?

MySQL data advanced

美国电子烟巨头 Juul 遭遇灭顶之灾,所有产品强制下架

Queue queue

NVIDIA's CVPR 2022 oral is on fire! 2D images become realistic 3D objects in seconds! Here comes the virtual jazz band!

Troubleshooting steps for Oracle pool connection request timeout

TP5 using post to receive array data times variable type error: solution to array error

[Eureka source code analysis]

canvas无限扫描js特效代码

Cookie encryption 4 RPC method determines cookie encryption

How do novices choose the grade of investment and financial products?