Realization of neural networks with numpy

2022-06-25 13:50:00 【Xiaoshuai acridine】

Original title: Let's code a Neural Network in plain NumPy

Original author: Piotr Skalski

Link to the original text: https://medium.com/towards-data-science/lets-code-a-neural-network-in-plain-numpy-ae7e74410795

High-level frameworks such as Keras, TensorFlow, or PyTorch let us build very complex models quickly. However, it is worth taking the time to look inside and understand the underlying concepts. Not long ago I published an article explaining, in a simple way, how neural networks work. That was a highly theoretical piece, devoted mainly to the mathematics that gives neural networks their power. From the beginning I intended to follow up on the topic in a more practical way. This time we will put that knowledge to use and build a fully operational neural network using only NumPy. Finally, we will test our model on a simple classification problem and compare its performance with a neural network built with Keras.

Figure 1. Example of dense neural network architecture

First things first

Before we start programming, let's stop for a moment and prepare a basic roadmap. Our goal is to write a program that can create a densely connected neural network with a specified architecture (the number and size of layers and the appropriate activation functions). Figure 1 shows an example of such a network. Most importantly, we must be able to train our network and use it to make predictions.

Figure 2. Neural network blueprint

The figure above shows the operations that have to be performed while training our NN. It also shows how many parameters we have to update and read at different stages of a single iteration. Building the right data structure and skillfully managing its state is one of the most difficult parts of our task. Due to time constraints, I will not describe the role of each parameter in the diagram in detail. I refer anyone interested to the first article in this series, where I hope you will find answers to all the questions that bother you.

Figure 3. Dimensions of weight matrix W and bias vector b for layer l.

Initializing the neural network layers

We start by initializing the weight matrix W and the bias vector b for each layer. In Figure 3 I have prepared a small cheat sheet that will help us assign the appropriate dimensions to these coefficients. The superscript [l] denotes the index of the current layer (counted from one), and n denotes the number of units in a given layer. I assume that the information describing the NN architecture will be delivered to our program as a list similar to the one in Snippet 1. Each item in the list is a dictionary describing the basic parameters of a single network layer: input_dim, the size of the signal vector supplied as input to the layer; output_dim, the size of the activation vector obtained at the output of the layer; and activation, the activation function to be used inside the layer.

nn_architecture = [
    {"input_dim": 2, "output_dim": 4, "activation": "relu"},
    {"input_dim": 4, "output_dim": 6, "activation": "relu"},
    {"input_dim": 6, "output_dim": 6, "activation": "relu"},
    {"input_dim": 6, "output_dim": 4, "activation": "relu"},
    {"input_dim": 4, "output_dim": 1, "activation": "sigmoid"},
]

Snippet 1. A list containing parameters describing a particular neural network. This list corresponds to the NN shown in Figure 1.

If you are familiar with this topic, you may have already heard an anxious voice in your head: "Hey, hey! Something is wrong! Some of the fields are unnecessary..." Yes, this time your inner voice is right. The vector coming out of one layer is also the input to the next one, so knowing the size of just one of these vectors would be enough. However, I deliberately decided to use this notation to keep the objects consistent across all layers and to make the code easier to understand for those encountering these topics for the first time.
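Because each layer's input size is stated explicitly, a mismatch between neighbouring layers is easy to introduce by hand. A minimal sketch of a consistency check follows; the helper name check_architecture is hypothetical and is not part of the original article.

```python
def check_architecture(nn_architecture):
    """Return True if every layer's input_dim equals the previous layer's output_dim.

    Hypothetical helper, not part of the original article.
    """
    for prev, curr in zip(nn_architecture, nn_architecture[1:]):
        if prev["output_dim"] != curr["input_dim"]:
            return False
    return True


good_architecture = [
    {"input_dim": 2, "output_dim": 4, "activation": "relu"},
    {"input_dim": 4, "output_dim": 6, "activation": "relu"},
    {"input_dim": 6, "output_dim": 1, "activation": "sigmoid"},
]

# The second layer expects 5 inputs but the first one produces 4.
bad_architecture = [
    {"input_dim": 2, "output_dim": 4, "activation": "relu"},
    {"input_dim": 5, "output_dim": 1, "activation": "sigmoid"},
]

print(check_architecture(good_architecture))
print(check_architecture(bad_architecture))
```

Running such a check before initializing the layers turns a confusing shape error deep inside a matrix product into an early, readable failure.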

import numpy as np

def init_layers(nn_architecture, seed=99):
    # seed the generator so that runs are reproducible
    np.random.seed(seed)
    # dictionary that will hold W and b for every layer
    params_values = {}

    for idx, layer in enumerate(nn_architecture):
        # layers are numbered from 1
        layer_idx = idx + 1
        layer_input_size = layer["input_dim"]
        layer_output_size = layer["output_dim"]

        # W has shape (output, input), b has shape (output, 1)
        params_values['W' + str(layer_idx)] = np.random.randn(
            layer_output_size, layer_input_size) * 0.1
        params_values['b' + str(layer_idx)] = np.random.randn(
            layer_output_size, 1) * 0.1

    return params_values

Snippet 2. The function that initializes the values of the weight matrices and bias vectors.

Let's finally focus on the main task of this part: the initialization of layer parameters. Those who have looked at Snippet 2 and have some experience with NumPy will have noticed that the matrix W and the vector b are filled with small random numbers. This is no accident. The weights cannot all be initialized with the same number, because that leads to the symmetry-breaking problem. Basically, if all the weights are the same, then no matter what the input X is, all units in the hidden layer will be the same too. In a sense we get stuck in the initial state with no hope of escape, no matter how long we train our model or how deep our network is. Linear algebra does not forgive.
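The symmetry problem described above can be seen directly in a few lines. This is a small illustration rather than part of the original code: the layer size (4 units, 2 inputs), the constant 0.5, and the random data are all arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(2, 5))            # 2 features, 5 samples (arbitrary data)

# Constant initialization: every hidden unit has identical weights and bias.
W_const = np.full((4, 2), 0.5)
b = np.zeros((4, 1))
Z_const = W_const @ X + b

# Small random initialization, in the spirit of init_layers.
W_rand = rng.normal(size=(4, 2)) * 0.1
Z_rand = W_rand @ X + b

# Compare every row (one row per hidden unit) with the first row.
rows_identical_const = np.allclose(Z_const, Z_const[0])
rows_identical_rand = np.allclose(Z_rand, Z_rand[0])

print(rows_identical_const, rows_identical_rand)
```

With constant weights every unit computes the same value for every sample, so every unit also receives the same gradient and the units never diverge from each other during training.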

In the first iterations, using small values improves the efficiency of the algorithm. Looking at the graph of the sigmoid function (Figure 4), we can see that it becomes almost flat for large values, which has a significant effect on the learning speed of our NN. All in all, initializing the parameters with small random numbers is a simple approach, but it guarantees a good enough starting point for our algorithm. The prepared parameter values are stored in a Python dictionary under keys that uniquely identify the layer they belong to. The dictionary is returned at the end of the function, so we will have access to its contents in the next stages of our algorithm.
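The flattening of the sigmoid for large inputs, mentioned above as the reason for preferring small initial values, can be checked numerically. The sketch below evaluates the derivative sigmoid(z) * (1 - sigmoid(z)) at a few hand-picked points; the helper name sigmoid_grad is an assumption made for this illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_grad(z):
    # derivative of the sigmoid: s * (1 - s)
    s = sigmoid(z)
    return s * (1.0 - s)

# The slope is largest at 0 and shrinks quickly as |z| grows.
for z in (0.0, 2.0, 10.0):
    print(z, sigmoid_grad(z))
```

The derivative is 0.25 at zero and falls below 1e-4 at z = 10, so a unit that starts far from zero passes back almost no gradient.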

Activation functions

Among all the functions we will use, a few are very simple but powerful. Activation functions can be written in a single line of code, yet they give the neural network the non-linearity and expressiveness it needs. "Without them, our neural network would become a combination of linear functions, so it would be just a linear function itself." There are many activation functions, but in this project I decided to provide the possibility of using two of them: sigmoid and ReLU. To be able to go full circle and pass both forward and backward propagation, we also have to prepare their derivatives.

def sigmoid(Z):
    return 1 / (1 + np.exp(-Z))

def relu(Z):
    return np.maximum(0, Z)

def sigmoid_backward(dA, Z):
    # chain rule: dA * sigmoid'(Z)
    sig = sigmoid(Z)
    return dA * sig * (1 - sig)

def relu_backward(dA, Z):
    # the gradient passes through only where Z > 0
    dZ = np.array(dA, copy=True)
    dZ[Z <= 0] = 0
    return dZ

Snippet 3. ReLU and Sigmoid activation functions and their derivatives.
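One way to gain confidence in the backward functions is to compare them with a central finite difference. The sketch below repeats the four functions from Snippet 3 so that it runs on its own; the test points and the step eps are arbitrary choices, and the points deliberately avoid zero, where ReLU is not differentiable.

```python
import numpy as np

def sigmoid(Z):
    return 1 / (1 + np.exp(-Z))

def relu(Z):
    return np.maximum(0, Z)

def sigmoid_backward(dA, Z):
    sig = sigmoid(Z)
    return dA * sig * (1 - sig)

def relu_backward(dA, Z):
    dZ = np.array(dA, copy=True)
    dZ[Z <= 0] = 0
    return dZ

Z = np.array([[-2.0, -0.5, 0.3, 1.7]])   # arbitrary points away from 0
dA = np.ones_like(Z)                     # upstream gradient of 1 isolates the derivative
eps = 1e-6

# central differences approximate the true derivatives
num_sigmoid = (sigmoid(Z + eps) - sigmoid(Z - eps)) / (2 * eps)
num_relu = (relu(Z + eps) - relu(Z - eps)) / (2 * eps)

sigmoid_ok = np.allclose(sigmoid_backward(dA, Z), num_sigmoid, atol=1e-6)
relu_ok = np.allclose(relu_backward(dA, Z), num_relu, atol=1e-6)
print(sigmoid_ok, relu_ok)
```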

Forward propagation

The designed neural network has a simple architecture. Information flows in one direction: it is delivered in the form of an X matrix, then travels through the hidden units, resulting in the vector of predictions Y_hat. To make it easier to read, I split forward propagation into two separate functions: a step forward for a single layer and a step forward for the entire NN.

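def single_layer_forward_propagation(A_prev, W_curr, b_curr, activation="relu"):
    # affine transformation of the previous layer's output
    Z_curr = np.dot(W_curr, A_prev) + b_curr

    # select the activation function; strings are compared with ==, not "is"
    if activation == "relu":
        activation_func = relu
    elif activation == "sigmoid":
        activation_func = sigmoid
    else:
        raise Exception('Non-supported activation function')

    # return the activation A and the intermediate Z
    return activation_func(Z_curr), Z_curr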

Snippet 4. Single layer forward propagation step

This part of the code is probably the most straightforward and the easiest to understand. Given the input signal from the previous layer, we compute the affine transformation Z and then apply the selected activation function. By using NumPy, we can take advantage of vectorization, performing matrix operations for the whole layer and the whole batch of examples at once. This eliminates explicit loops and significantly speeds up our calculations. In addition to the computed matrix A, our function also returns the intermediate value Z. Why? The answer is shown in Figure 2: we will need Z during the backward step.

Figure 5. Dimensions of individual matrices used in a forward step.
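The dimensions involved in a single forward step can be verified with a short shape check. The sizes below (4 units in the previous layer, 6 in the current one, a batch of 10 samples) are arbitrary assumptions chosen only for illustration.

```python
import numpy as np

n_prev, n_curr, m = 4, 6, 10   # units in layer l-1, units in layer l, batch size

rng = np.random.default_rng(1)
A_prev = rng.normal(size=(n_prev, m))             # one column per sample
W_curr = rng.normal(size=(n_curr, n_prev)) * 0.1
b_curr = rng.normal(size=(n_curr, 1)) * 0.1

# b_curr has a single column and is broadcast across all m columns
Z_curr = W_curr @ A_prev + b_curr
A_curr = np.maximum(0, Z_curr)

print(Z_curr.shape, A_curr.shape)
```

Each column of Z_curr is exactly what a per-sample computation would give, which is why the vectorized form can replace a loop over samples.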

Using the prepared single-layer forward function, we can now easily build the whole forward propagation step. This is a slightly more complex function, whose role is not only to make predictions but also to organize the collection of intermediate values. It returns the prediction together with a Python dictionary that contains the A and Z values computed for particular layers.

def full_forward_propagation(X, params_values, nn_architecture):
    # intermediate values needed later by the backward step
    memory = {}
    # X is the activation of "layer 0"
    A_curr = X

    for idx, layer in enumerate(nn_architecture):
        # layers are numbered from 1
        layer_idx = idx + 1
        A_prev = A_curr

        activ_function_curr = layer["activation"]
        W_curr = params_values["W" + str(layer_idx)]
        b_curr = params_values["b" + str(layer_idx)]
        A_curr, Z_curr = single_layer_forward_propagation(A_prev, W_curr, b_curr, activ_function_curr)

        # store the input of this layer and its pre-activation
        memory["A" + str(idx)] = A_prev
        memory["Z" + str(layer_idx)] = Z_curr

    return A_curr, memory

Snippet 5. Full forward propagation step

Loss function

To monitor our progress and make sure that we are moving in the right direction, we should routinely calculate the value of the loss function. "Generally speaking, the loss function is designed to show how far we are from the 'ideal' solution." It is selected according to the problem we plan to solve, and frameworks such as Keras offer many options to choose from. Because I plan to test our NN on the classification of points between two classes, I decided to use binary cross-entropy, which is defined by the following formula. To get more information about the learning process, I also decided to implement a function that calculates accuracy.
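For reference, the standard form of binary cross-entropy, which is what get_cost_value computes, can be written as follows. The notation is an assumption made here: m is the number of examples, y^{(i)} the true label, and \hat{y}^{(i)} the predicted probability for example i.

```latex
J(W, b) = -\frac{1}{m} \sum_{i=1}^{m} \left[ y^{(i)} \log \hat{y}^{(i)} + \left(1 - y^{(i)}\right) \log\left(1 - \hat{y}^{(i)}\right) \right]
```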

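def get_cost_value(Y_hat, Y):
    # number of examples
    m = Y_hat.shape[1]
    # binary cross-entropy averaged over the batch
    cost = -1 / m * (np.dot(Y, np.log(Y_hat).T) + np.dot(1 - Y, np.log(1 - Y_hat).T))
    return np.squeeze(cost)

# Helper used by get_accuracy_value but not shown in this article;
# the 0.5 threshold is an assumption.
def convert_prob_into_class(probs):
    return (probs > 0.5).astype(int)

def get_accuracy_value(Y_hat, Y):
    Y_hat_ = convert_prob_into_class(Y_hat)
    return (Y_hat_ == Y).all(axis=0).mean()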

Snippet 6. Calculating the value of the cost function and accuracy
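One practical caveat: if the sigmoid output saturates to exactly 0 or 1, np.log returns -inf and the cost becomes nan. A possible guard, sketched below, clips the predictions before taking the logarithm. The function name get_cost_value_clipped and the value of eps are assumptions, not part of the original article.

```python
import numpy as np

def get_cost_value_clipped(Y_hat, Y, eps=1e-12):
    """Binary cross-entropy with predictions clipped away from 0 and 1.

    Hypothetical variant of get_cost_value; eps is an arbitrary small constant.
    """
    m = Y_hat.shape[1]
    Y_hat = np.clip(Y_hat, eps, 1 - eps)
    cost = -1 / m * (np.dot(Y, np.log(Y_hat).T) + np.dot(1 - Y, np.log(1 - Y_hat).T))
    return float(np.squeeze(cost))

Y = np.array([[1, 0, 1, 0]])
Y_hat = np.array([[0.9, 0.2, 1.0, 0.0]])   # the last two predictions are saturated

cost = get_cost_value_clipped(Y_hat, Y)
print(cost)
```

Here the two saturated predictions are both correct, so after clipping they contribute almost nothing and the cost is dominated by the 0.9 and 0.2 entries.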

Backward propagation

Backward propagation is regarded by many inexperienced deep learning enthusiasts as an intimidating and difficult algorithm. The combination of differential calculus and linear algebra often deters people without solid mathematical training. So don't worry too much if you can't understand everything right away. Trust me, we have all been through it.

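def single_layer_backward_propagation(dA_curr, W_curr, b_curr, Z_curr, A_prev, activation="relu"):
    # number of examples
    m = A_prev.shape[1]

    # select the derivative of the activation; strings are compared with ==, not "is"
    if activation == "relu":
        backward_activation_func = relu_backward
    elif activation == "sigmoid":
        backward_activation_func = sigmoid_backward
    else:
        raise Exception('Non-supported activation function')

    # derivative with respect to the pre-activation Z
    dZ_curr = backward_activation_func(dA_curr, Z_curr)
    # derivative with respect to the weights W
    dW_curr = np.dot(dZ_curr, A_prev.T) / m
    # derivative with respect to the bias b
    db_curr = np.sum(dZ_curr, axis=1, keepdims=True) / m
    # derivative with respect to the previous layer's activation
    dA_prev = np.dot(W_curr.T, dZ_curr)

    return dA_prev, dW_curr, db_curr

Snippet 7. Single layer backward propagation step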

People often confuse backward propagation with gradient descent, but these are two separate matters. The purpose of the first is to calculate the gradient efficiently, whereas the second uses the calculated gradient for optimization. In a neural network we calculate the gradient of the cost function (discussed earlier) with respect to the parameters, but backpropagation can be used to calculate derivatives of any function. The essence of the algorithm is the recursive use of the chain rule known from differential calculus: computing the derivative of a function created by composing other functions whose derivatives we already know. For a single network layer, this process is described by the following formulas. Unfortunately, because this article focuses mainly on practical implementation, I omit the derivation. Looking at the formulas, it becomes obvious why we decided to remember the values of the A and Z matrices for the intermediate layers.
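Written out to match single_layer_backward_propagation term by term, the per-layer formulas take the form below. The notation is an assumption: g denotes the activation function of layer l, \odot is the element-wise product, and the sum runs over the m examples in the batch.

```latex
\begin{aligned}
dZ^{[l]} &= dA^{[l]} \odot g'\left(Z^{[l]}\right) \\
dW^{[l]} &= \frac{1}{m}\, dZ^{[l]} A^{[l-1]\top} \\
db^{[l]} &= \frac{1}{m} \sum_{i=1}^{m} dZ^{[l](i)} \\
dA^{[l-1]} &= W^{[l]\top} dZ^{[l]}
\end{aligned}
```

Both Z^{[l]} and A^{[l-1]} appear on the right-hand side, which is why the forward pass stores them.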

Figure 6. Forward and backward propagation for a single layer.

Just as with forward propagation, I decided to split the calculations into two separate functions. The first one (Snippet 7) focuses on a single layer and boils down to rewriting the above formulas in NumPy. The second one, representing full backward propagation, deals mainly with reading and updating values in three dictionaries. We start by calculating the derivative of the cost function with respect to the prediction vector, the result of forward propagation. This is quite trivial, as it only consists of rewriting the following formula. Then we iterate through the layers of the network starting from the end and calculate the derivatives with respect to all parameters according to Figure 6. Finally, the function returns a Python dictionary containing the gradient we are looking for.
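The derivative of binary cross-entropy with respect to the prediction, in the element-wise form that the first line of the backward pass computes, is shown below. The 1/m factor is left out here on purpose because the code applies it later, when dW and db are computed; the symbol L for the per-example loss is an assumption.

```latex
\frac{\partial L}{\partial \hat{Y}} = -\left( \frac{Y}{\hat{Y}} - \frac{1 - Y}{1 - \hat{Y}} \right)
```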

def full_backward_propagation(Y_hat, Y, memory, params_values, nn_architecture):
    grads_values = {}

    # number of examples
    m = Y.shape[1]
    # make sure Y has the same shape as the prediction vector
    Y = Y.reshape(Y_hat.shape)

    # derivative of the cost with respect to the prediction; starts the chain
    dA_prev = - (np.divide(Y, Y_hat) - np.divide(1 - Y, 1 - Y_hat))

    # walk through the layers from the last one to the first
    for layer_idx_prev, layer in reversed(list(enumerate(nn_architecture))):
        # layers are numbered from 1
        layer_idx_curr = layer_idx_prev + 1
        activ_function_curr = layer["activation"]

        dA_curr = dA_prev

        A_prev = memory["A" + str(layer_idx_prev)]
        Z_curr = memory["Z" + str(layer_idx_curr)]
        W_curr = params_values["W" + str(layer_idx_curr)]
        b_curr = params_values["b" + str(layer_idx_curr)]

        dA_prev, dW_curr, db_curr = single_layer_backward_propagation(
            dA_curr, W_curr, b_curr, Z_curr, A_prev, activ_function_curr)

        grads_values["dW" + str(layer_idx_curr)] = dW_curr
        grads_values["db" + str(layer_idx_curr)] = db_curr

    return grads_values

Snippet 8. Full backward propagation step
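A common sanity check for a hand-written backward pass is to compare its gradients with finite differences of the cost. The sketch below does this for a deliberately tiny stand-alone network (one ReLU layer followed by one sigmoid unit) that uses the same formulas as the snippets above; the network size, data, and all helper names are assumptions made for this illustration.

```python
import numpy as np

def sigmoid(Z):
    return 1 / (1 + np.exp(-Z))

def forward(X, p):
    Z1 = p["W1"] @ X + p["b1"]
    A1 = np.maximum(0, Z1)
    Z2 = p["W2"] @ A1 + p["b2"]
    return sigmoid(Z2), (Z1, A1)

def cost(X, Y, p):
    Y_hat, _ = forward(X, p)
    return float(-np.mean(Y * np.log(Y_hat) + (1 - Y) * np.log(1 - Y_hat)))

def backward(X, Y, p):
    m = X.shape[1]
    Y_hat, (Z1, A1) = forward(X, p)
    dA2 = -(Y / Y_hat - (1 - Y) / (1 - Y_hat))
    dZ2 = dA2 * Y_hat * (1 - Y_hat)          # sigmoid derivative
    dA1 = p["W2"].T @ dZ2
    dZ1 = dA1 * (Z1 > 0)                     # ReLU derivative
    return {"W1": dZ1 @ X.T / m, "b1": dZ1.sum(axis=1, keepdims=True) / m,
            "W2": dZ2 @ A1.T / m, "b2": dZ2.sum(axis=1, keepdims=True) / m}

def numerical_grad(X, Y, p, key, eps=1e-6):
    # central difference, one parameter entry at a time
    grad = np.zeros_like(p[key])
    for idx in np.ndindex(p[key].shape):
        old = p[key][idx]
        p[key][idx] = old + eps
        plus = cost(X, Y, p)
        p[key][idx] = old - eps
        minus = cost(X, Y, p)
        p[key][idx] = old                    # restore the original value
        grad[idx] = (plus - minus) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
X = rng.normal(size=(2, 8))
Y = (rng.random((1, 8)) > 0.5).astype(float)
p = {"W1": rng.normal(size=(3, 2)), "b1": rng.normal(size=(3, 1)),
     "W2": rng.normal(size=(1, 3)), "b2": rng.normal(size=(1, 1))}

analytic = backward(X, Y, p)
max_err = max(np.abs(analytic[k] - numerical_grad(X, Y, p, k)).max() for k in p)
print(max_err)
```

A maximum difference many orders of magnitude below the gradients themselves suggests that the analytic formulas and the cost function agree; a large difference usually points to a sign or transpose error.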

Updating parameter values

The goal of this step is to update the network parameters using gradient optimization. In this way we try to bring our target function closer to a minimum. To accomplish this, we use two dictionaries passed as function arguments: params_values, which stores the current values of the parameters, and grads_values, which stores the derivatives of the cost function with respect to these parameters. Now we only need to apply the following equation to each layer. This is a very simple optimization algorithm, but I decided to use it because it is a good starting point for more advanced optimizers, which will probably be the subject of one of my next articles.
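In the notation used so far, the plain gradient descent update for layer l can be written as below, where \alpha stands for the learning rate (the learning_rate argument in the code); the symbol choice is an assumption.

```latex
W^{[l]} \leftarrow W^{[l]} - \alpha\, dW^{[l]}, \qquad b^{[l]} \leftarrow b^{[l]} - \alpha\, db^{[l]}
```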

def update(params_values, grads_values, nn_architecture, learning_rate):
    # layers are numbered from 1, matching the keys created in init_layers
    for layer_idx, layer in enumerate(nn_architecture, 1):
        params_values["W" + str(layer_idx)] -= learning_rate * grads_values["dW" + str(layer_idx)]
        params_values["b" + str(layer_idx)] -= learning_rate * grads_values["db" + str(layer_idx)]

    return params_values

Snippet 9. Updating parameters values using gradient descent

Putting things together

The most difficult part of the task is behind us: we have prepared all the necessary functions, and now we only need to put them together in the right order. To better understand the sequence of operations, it is worth looking again at the diagram in Figure 2. The function returns the optimized weights obtained as a result of training, together with the history of the metrics recorded during training. To make a prediction, you only need to run a full forward propagation using the received weight matrices and a set of test data.

def train(X, Y, nn_architecture, epochs, learning_rate):
    # initialize the parameters of the network
    params_values = init_layers(nn_architecture, 2)
    # lists that store the history of metrics calculated during training
    cost_history = []
    accuracy_history = []

    for i in range(epochs):
        # step forward
        Y_hat, cache = full_forward_propagation(X, params_values, nn_architecture)

        # calculate the metrics and save them in the history
        cost = get_cost_value(Y_hat, Y)
        cost_history.append(cost)
        accuracy = get_accuracy_value(Y_hat, Y)
        accuracy_history.append(accuracy)

        # step backward: calculate the gradient
        grads_values = full_backward_propagation(Y_hat, Y, cache, params_values, nn_architecture)
        # update the model parameters
        params_values = update(params_values, grads_values, nn_architecture, learning_rate)

    return params_values, cost_history, accuracy_history

Snippet 10. Training a model
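Prediction, as noted above, is just one forward pass with the trained parameters. The sketch below shows one possible predict helper; the helper, its threshold of 0.5, and the stand-in toy_forward function are all assumptions. toy_forward only mimics the return signature of full_forward_propagation so that the example runs on its own; in practice full_forward_propagation would be passed in its place.

```python
import numpy as np

def predict(X, params_values, nn_architecture, forward_fn, threshold=0.5):
    """Run one forward pass and convert probabilities into 0/1 labels.

    Hypothetical helper; forward_fn must return (Y_hat, memory).
    """
    Y_hat, _ = forward_fn(X, params_values, nn_architecture)
    return (Y_hat > threshold).astype(int)

def toy_forward(X, params_values, nn_architecture):
    # Stand-in for full_forward_propagation: a single sigmoid unit.
    Z = params_values["W1"] @ X + params_values["b1"]
    return 1 / (1 + np.exp(-Z)), {}

params = {"W1": np.array([[1.0, -1.0]]), "b1": np.array([[0.0]])}
X_test = np.array([[2.0, 0.0, -1.0],
                   [0.0, 3.0, -2.0]])      # 2 features, 3 samples

labels = predict(X_test, params, None, toy_forward)
print(labels)
```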

David vs Goliath

It's time to see whether our model can solve a simple classification problem. I generated a dataset consisting of points belonging to two classes, as shown in Figure 7. Let's try to teach our model to classify points belonging to this distribution. For comparison, I also prepared a model in a high-level framework, Keras. Both models have the same architecture and learning rate. Still, this is a really uneven fight, as the implementation we have prepared is probably the simplest one possible. In the end, both the NumPy and Keras models achieved a similar accuracy of 95% on the test set. However, our model needed a few dozen times longer to reach that result. In my opinion, this is mainly due to the lack of proper optimization.

Figure 7. Test dataset

Figure 8. Visualisation of the classification boundaries achieved with both models

边栏推荐

- 楼宇自动化专用BACnet网关BL103

- go---- mgo

- 语法'陷阱'

- Explain the possible memory leaks caused by the handler at one time and the solutions | the developer said · dtalk

- [open source Hongmeng system display] the rk3568 development board is equipped with openharmony 3.1 release

- Drago Education - typescript learning

- Table de hachage, conflit de hachage

- Cesium--- figure loading

- 历史上的今天:网易成立;首届消费电子展召开;世界上第一次网络直播

- 多臺雲服務器的 Kubernetes 集群搭建

猜你喜欢

leetcode:剑指 Offer II 091. 粉刷房子【二维dp】

Custom vertical table

The priority of catch() and then (..., ERR) of promise

When the input tag type is number, the input of E, e, -, + is blocked

网络远程访问的方式使用树莓派

Some knowledge of the initial C language

Numpy库使用入门

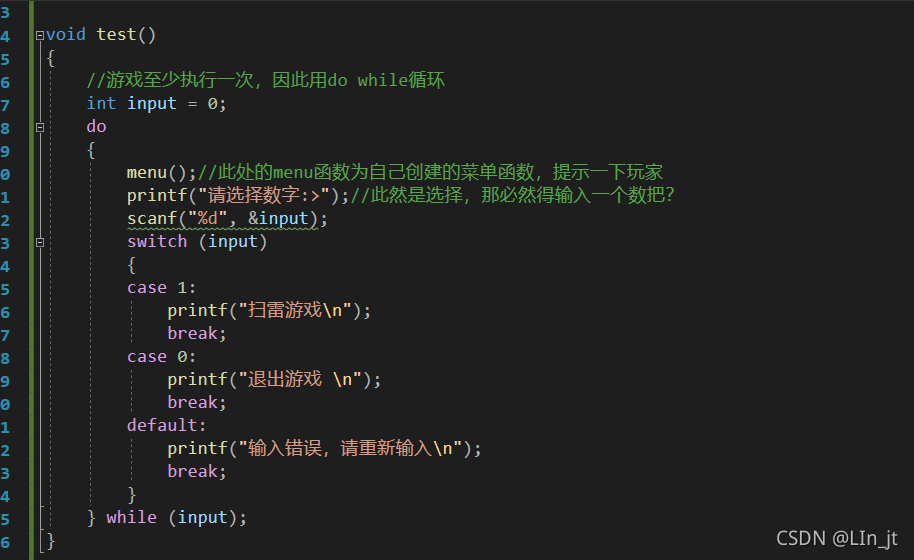

Simple realization of mine sweeping

Gorm---- Association query

解决报错:Creating window glfw ERROR: GLEW initalization error: Missing GL version

随机推荐

Component: is to switch between multiple components

楼宇自动化专用BACnet网关BL103

How unity makes the UI intercept click events

Error1822 and error1824 are displayed in the database

如何在 2022 年为 Web 应用程序选择技术堆栈

分类器与cross entropy loss函数

leetcode:剑指 Offer II 091. 粉刷房子【二维dp】

历史上的今天:网易成立;首届消费电子展召开;世界上第一次网络直播

Class usage and inheritance in ES6

[open source Hongmeng system display] the rk3568 development board is equipped with openharmony 3.1 release

Discriminative v.s.Generative

Related examples of data storage in memory

哈希錶、哈希沖突

[pit avoidance means "difficult"] antd select fuzzy search

Is it unsafe to make new debts

The starting point for learning programming.

Syntax 'trap'

关于猜数字游戏的实现

關於一道教材題的講解

Data acquisition system gateway acquisition plant efficiency